OpenGL

OpenGL is an open, cross-platform graphics API with a programmable rendering pipeline, used for game making, art and design, visualization, and more. First released in 1992, OpenGL is one of the most widely used and most standardized graphics APIs in the world. OpenGL is extremely powerful because it lets developers write programs that run directly on the graphics card. Graphics cards are designed for massively parallel computation, which is what allows users to render highly detailed, even photorealistic, scenes in real time.

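In addition to its computational strength, OpenGL is an open standard. The specification is maintained by the Khronos Group, an industry consortium whose members include the major graphics card manufacturers, and those manufacturers ship their own implementations in their graphics drivers. This keeps the graphics pipeline largely standardized across PCs, meaning developers have far less cross-compatibility work to worry about. In fact, virtually all modern PCs have some version of OpenGL available through their graphics drivers, whether users are aware of it or not.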

OpenGL is specified as a C API, which gives it low-level control over the specifics of the graphics card. However, bindings and wrapper packages provide virtually identical APIs in other languages such as Java, Python, JavaScript, and more, and C++ can call the C API directly. Practically any programmer with strong knowledge of a common programming language can get started in OpenGL.

Early versions of OpenGL used what’s called the fixed-function pipeline, which was not programmable. Developers were unable to control the specifics of the render calculations. For example, while developers could create a “light” at a certain point in the scene, they had little control over how the lighting calculations were made. OpenGL 2.0 introduced programmable shaders, and the 3.x releases deprecated and then removed the old fixed-function features. The result is what’s called OpenGL’s modern programmable render pipeline, which gives developers much greater control over the data stored on the graphics card and the render calculations performed on that data. Applications written against this modern feature set generally keep working on later versions too, so a program written for OpenGL 3.3 should run for users whose drivers support 3.3 or anything newer. While this shift made it harder to understand and write software using OpenGL, developers now have much greater control over how the graphics card is utilized. If you’re interested in learning how to use OpenGL, I’ve left a link to a great tutorial below. The tutorial is designed for C++, but as stated earlier, the OpenGL API is virtually identical in all languages, making it easy to follow along regardless:

Learn OpenGL – https://learnopengl.com/Introduction
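
To give a feel for what “controlling the lighting calculations” means, here is a minimal sketch in plain Python. It runs on the CPU and does not use OpenGL at all; in a real application this logic would live in a shader running on the graphics card. The function names (lambert_diffuse, toon_diffuse) and the sample values are made up for illustration. The point is that with a programmable pipeline, the developer can swap one lighting formula for another.

```python
import math


def normalize(v):
    """Scale a 3D vector to length 1."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    """Dot product of two vectors."""
    return sum(x * y for x, y in zip(a, b))


def diffuse_intensity(normal, to_light):
    """Cosine of the angle between the surface normal and the light, clamped at 0."""
    return max(dot(normalize(normal), normalize(to_light)), 0.0)


def lambert_diffuse(normal, to_light, base_color):
    """Classic smooth diffuse shading: brightness follows the cosine directly."""
    intensity = diffuse_intensity(normal, to_light)
    return tuple(channel * intensity for channel in base_color)


def toon_diffuse(normal, to_light, base_color, bands=3):
    """Cartoon-style alternative: round the brightness up to a few flat bands."""
    intensity = diffuse_intensity(normal, to_light)
    stepped = math.ceil(intensity * bands) / bands
    return tuple(channel * stepped for channel in base_color)


# Hypothetical surface facing straight up, lit from above and slightly in front.
surface_normal = (0.0, 1.0, 0.0)
direction_to_light = (0.0, 1.0, 1.0)
orange = (1.0, 0.5, 0.0)

print("smooth:", lambert_diffuse(surface_normal, direction_to_light, orange))
print("toon:  ", toon_diffuse(surface_normal, direction_to_light, orange))
```

With the old fixed-function pipeline, something like the first formula was built in and hard to change. With shaders, either version (or something else entirely) is just code the developer writes.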

Applications

Games and Game Engines

The selling point of many modern video games is their high quality, photorealistic graphics. Graphics card hardware has greatly evolved over the past few decades, but the hardware is useless without software that can utilize it. OpenGL is a common choice for computer graphics in video games and game engines. Vertex data called meshes, a series of points in 3D space, define the structure of objects such as a player, buildings, weapons, etc. 2D images called “textures” can be mapped onto the meshes to create the visible components of each object, such as the player’s face or the weapon’s handle. When a user does something like move their camera or shoot a weapon, the render calculations are adjusted to update what’s visible on the screen or the player’s posture, respectively. Synchronizing these calculations between all objects on the screen creates a large, complex scene with great artistic detail. OpenGL is one of the few options powerful enough to render such scenes in real-time.
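
Here is a rough sketch of how a mesh and a texture fit together, again in plain Python on the CPU rather than in OpenGL itself. The data layout (positions, texture coordinates, and triangle indices) mirrors what graphics APIs commonly work with, but the variable and function names are my own, and the tiny 2x2 texture is invented for the example.

```python
# A flat square (a "quad") made of four vertices and two triangles.
positions = [
    (-1.0, -1.0, 0.0),  # 0: bottom-left
    (1.0, -1.0, 0.0),   # 1: bottom-right
    (1.0, 1.0, 0.0),    # 2: top-right
    (-1.0, 1.0, 0.0),   # 3: top-left
]

# Texture coordinates (u, v) in the range 0..1, one pair per vertex.
uvs = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

# Each triangle is three indices into the vertex lists above.
triangles = [(0, 1, 2), (0, 2, 3)]

# A 2x2 RGB texture, stored bottom row first.
texture = [
    [(255, 0, 0), (0, 255, 0)],        # bottom row: red, green
    [(0, 0, 255), (255, 255, 255)],    # top row: blue, white
]


def sample_nearest(tex, u, v):
    """Return the texel closest to (u, v), clamping at the texture edge."""
    height = len(tex)
    width = len(tex[0])
    col = min(int(u * width), width - 1)
    row = min(int(v * height), height - 1)
    return tex[row][col]


def interpolate_uv(triangle, weights):
    """Blend the UVs of a triangle's corners using barycentric weights."""
    u = sum(w * uvs[i][0] for w, i in zip(weights, triangle))
    v = sum(w * uvs[i][1] for w, i in zip(weights, triangle))
    return u, v


# Color at the center of the first triangle (equal weight on each corner).
center_u, center_v = interpolate_uv(triangles[0], (1 / 3, 1 / 3, 1 / 3))
print(sample_nearest(texture, center_u, center_v))
```

On the graphics card, this interpolate-then-sample step happens for every visible pixel of every triangle, which is why the parallel hardware matters so much.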

Simulation and Visualization

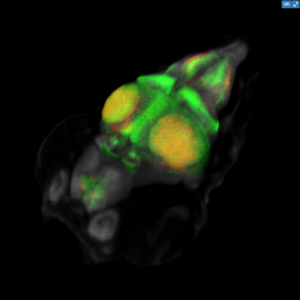

OpenGL can be a useful tool for simulation and data visualization. Data, such as medical imaging data, can be loaded from a file and stored in OpenGL’s pipeline. From there, the developer has free rein over how this data is rendered. Maybe different colors should correspond to different datasets. Perhaps more significant data points should appear larger than others. OpenGL’s render pipeline offers an incredible amount of flexibility in how data is simulated or visualized. One good example of this is the project I worked on through my internship at NIH last summer. I used X3DOM to create a visualization tool for 3D genetic imaging data in zebrafish. X3DOM is a set of open-source graphics libraries built on top of WebGL, which is itself based on OpenGL ES, a trimmed-down version of OpenGL (it’s not uncommon for OpenGL applications to have multiple layers like this). I will further discuss WebGL and its relation to OpenGL in the next section. If you’re interested in the example, visit the link below:

Zebrafish Brain Browser – http://zbbrowser.com
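
As a small illustration of the color and size ideas above, the sketch below turns raw data points into per-point render attributes. It is plain Python and is not taken from the zebrafish project; the dataset names, colors, field names, and size range are all assumptions I made up for the example. In an OpenGL application, a list like this would typically be packed into a buffer and handed to the graphics card.

```python
# Hypothetical color per dataset (RGB, each channel 0..1).
DATASET_COLORS = {
    "dataset_a": (1.0, 0.2, 0.2),
    "dataset_b": (0.2, 0.4, 1.0),
}


def rescale(value, lo, hi):
    """Map value from the range lo..hi onto 0..1 (0 if the range is empty)."""
    if hi == lo:
        return 0.0
    return (value - lo) / (hi - lo)


def to_render_attributes(points, min_size=2.0, max_size=12.0):
    """Give each point a color from its dataset and a size from its value."""
    values = [p["value"] for p in points]
    lo, hi = min(values), max(values)
    attributes = []
    for p in points:
        t = rescale(p["value"], lo, hi)
        attributes.append({
            "position": p["position"],
            "color": DATASET_COLORS[p["dataset"]],
            "size": min_size + t * (max_size - min_size),
        })
    return attributes


# Made-up sample data: larger values should be drawn as larger points.
sample_points = [
    {"position": (0.0, 0.0, 0.0), "dataset": "dataset_a", "value": 0.0},
    {"position": (1.0, 0.5, 0.0), "dataset": "dataset_b", "value": 5.0},
    {"position": (0.5, 1.0, 2.0), "dataset": "dataset_a", "value": 10.0},
]

for attrs in to_render_attributes(sample_points):
    print(attrs)
```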

WebGL

WebGL brings modern OpenGL to the web browser. It’s based on OpenGL ES, a version of OpenGL made for embedded systems like smartphones, tablets, and more. WebGL is, as you may have guessed, the web edition of the API. Exposed to JavaScript, the WebGL API, like other language wrappers, is very similar to the original OpenGL API. Developers can use WebGL to program render pipelines through the HTML5 canvas element. Graphics card programming in a web browser allows for massively cross-compatible and accessible applications, as developers don’t have to worry about platform-specific compilation; the web browser handles it for them. Below are a few examples of how WebGL has been used. If you want to get started with WebGL, here’s a link to the documentation:

WebGL Documentation – https://developer.mozilla.org/en-US/docs/Web/API/WebGL_API

Game Development

Many libraries built on WebGL exist for web browser game development. These libraries typically provide features like texturing, animation, and graphical effects, so developers don’t have to worry about the specifics of shader code or data buffers. Two examples are Phaser and three.js. Phaser is a 2D JavaScript game engine that has great documentation and tutorials, making it very easy to learn game development. The three.js library is more complex and is great for making 3D games in the web browser. Here are links to both if you’re interested:

Phaser – http://phaser.io/

three.js – https://threejs.org/

Virtual Reality

The WebVR API supports rendering to VR devices such as the Oculus Rift and HTC Vive (note that it has since been deprecated in favor of the newer WebXR Device API). While not directly dependent on the WebGL API, the two work very well together. One great example that uses both is A-Frame. A-Frame is an HTML5 framework that utilizes WebVR for streaming to virtual reality headsets, while its high-speed, real-time rendering calculations are handled by WebGL. A-Frame makes it very easy to set up a scene in VR and start having fun. If you’re interested in getting started with VR, A-Frame is a great place to start. I’ve shared some helpful links below:

WebVR API – https://developer.mozilla.org/en-US/docs/Web/API/WebVR_API

A-Frame – https://aframe.io/

Unity

Unity is one of the most popular game engines in the world. Many well-known games were developed in Unity, and it’s a very commonly used tool among game developers. It just so happens that Unity supports exporting to WebGL: create your game in Unity and you can build it for your browser. Like Phaser, it has great documentation and tutorials available online, making it relatively easy to pick up, even if you have no experience in game development. Here are some links to get you started:

Unity Tutorials – https://unity3d.com/learn/tutorials

Unity Documentation – https://docs.unity3d.com/Manual/index.html

Final Thoughts

OpenGL has served as a foundation for graphics programming for decades. What makes OpenGL so powerful is its incredible standardization. Virtually every modern PC and graphics card supports OpenGL. Additionally, OpenGL allows incredible control over data that gets stored on the graphics card and calculations performed on it. Anyone with programming knowledge can get started with OpenGL, and I encourage you to do so.

Image Sources

OpenGL Logo – https://medium.com/@wrongway4you/what-is-opengl-and-how-to-start-learning-it-34f19cfa219f

Witcher 3 – https://www.youtube.com/watch?v=Z8LrfUDhsEw

Phaser Game – http://phaser.io/news/2018/10/infinity-land

A-Frame Game – https://vrroom.buzz/vr-news/guide-vr/how-use-webvr-firefox-vive-or-rift

Unity Logo – https://logojoy.com/unity_logo_logotype_unity_3d/