[1] Lelkes, Y., Sood, G., & Iyengar, S. (2017). The Hostile Audience: The Effect of Access to Broadband Internet on Partisan Affect. American Journal of Political Science, 61(1), 5-20.

In this paper, Lelkes et al. exploit exogenous variation in access to broadband internet that stems from differences in right-of-way laws. These laws affect the cost of building internet infrastructure, and thus the price and availability of broadband access. The authors use this variation to identify the impact of broadband internet access on partisan polarization. The data come from several sources: an index of right-of-way laws, broadband access data from the Federal Communications Commission (FCC), partisan affect measures from the 2004 and 2008 National Annenberg Election Studies (NAES), media consumption data from comScore, and the Economic Research Service’s terrain topology, which classifies terrain into 21 categories. The authors find that access to broadband internet increases partisan hostility and boosts partisans’ consumption of partisan media. Their results suggest that if all states had adopted the least restrictive right-of-way regulations, partisan animus would have been roughly 2 percentage points higher.
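To make the identification idea concrete for myself, here is a minimal sketch of how an instrument can recover an effect that a naive comparison gets wrong. This is not the authors' specification or data: the four-row dataset is synthetic, the binary coding of the right-of-way law, the confounder `u`, and the "true effect" of 2.0 are all assumptions chosen so the arithmetic is easy to follow.

```python
def mean(values):
    return sum(values) / len(values)


def cov(a, b):
    """Population covariance of two equal-length sequences."""
    ma, mb = mean(a), mean(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / len(a)


# Synthetic, balanced toy design (NOT the paper's data).
# z: instrument, 1 = permissive right-of-way law (hypothetical coding)
# u: unobserved confounder that drives both broadband access and animus
rows = [(z, u) for z in (0, 1) for u in (-1, 1)]
z = [r[0] for r in rows]
u = [r[1] for r in rows]

TRUE_EFFECT = 2.0  # assumed effect of access on animus in this toy world

# Access depends on the law and on the confounder.
x = [zi + ui for zi, ui in zip(z, u)]
# Animus depends on access and, separately, on the confounder.
y = [TRUE_EFFECT * xi + 3 * ui for xi, ui in zip(x, u)]

# Naive regression slope of animus on access (ignores the confounder).
naive_slope = cov(x, y) / cov(x, x)
# Simple instrumental-variables slope: uses only variation in access driven by z.
iv_slope = cov(z, y) / cov(z, x)

print(f"naive slope: {naive_slope:.2f}, IV slope: {iv_slope:.2f}")
```

In this toy world the naive slope (4.40) overstates the assumed effect, while the IV slope recovers 2.00, because the instrument is uncorrelated with the confounder by construction. Whether right-of-way laws satisfy that condition in the real data is exactly what the paper has to argue.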

I really enjoyed reading the paper, and the analysis is very thorough. While reading, I had several doubts about the analysis, but the authors resolved most of them as I read on. For example, when they compare news media consumption between broadband and dial-up users, they do not report how many of the 50,000 panelists fall into each group, but they later use Coarsened Exact Matching (CEM), which reduces imbalance on a set of covariates. CEM seems like a good technique for handling imbalance in the dataset. It is a Monotonic Imbalance Bounding (MIB) matching method, which means that the balance between the treated and control groups is chosen by the user ex ante rather than discovered through the usual laborious process of checking after the fact and repeatedly re-estimating.

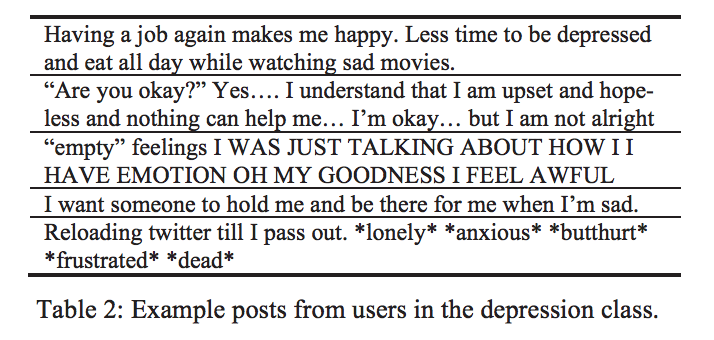
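The core of CEM as described above can be sketched in a few lines: coarsen each covariate into bins, group units that share the same bins, and keep only the groups that contain both treated and control units. The sketch below is my own illustration, not the authors' code. The covariates (`age`, `income`), the cutpoints, and the six units are hypothetical, with "treated" standing in for broadband users and "control" for dial-up users.

```python
from collections import defaultdict


def coarsen(value, cutpoints):
    """Return the index of the bin that `value` falls into."""
    return sum(value >= c for c in cutpoints)


def cem_match(units, cutpoints):
    """Group units by their coarsened covariates and keep only the strata
    that contain at least one treated and one control unit."""
    strata = defaultdict(list)
    for unit in units:
        key = tuple(coarsen(unit[name], cuts) for name, cuts in cutpoints.items())
        strata[key].append(unit)
    return {
        key: members
        for key, members in strata.items()
        if {u["treated"] for u in members} == {True, False}
    }


# Hypothetical toy data: treated = broadband user, control = dial-up user.
units = [
    {"id": 1, "treated": True, "age": 23, "income": 31_000},
    {"id": 2, "treated": False, "age": 27, "income": 35_000},
    {"id": 3, "treated": True, "age": 52, "income": 90_000},
    {"id": 4, "treated": False, "age": 68, "income": 20_000},
    {"id": 5, "treated": False, "age": 55, "income": 85_000},
    {"id": 6, "treated": True, "age": 41, "income": 150_000},
]

# The user picks the coarsening up front; this is the "ex ante" balance choice.
cutpoints = {"age": [30, 50, 65], "income": [40_000, 100_000]}

matched = cem_match(units, cutpoints)
matched_ids = sorted(u["id"] for members in matched.values() for u in members)
print(matched_ids)  # [1, 2, 3, 5]
```

Units 4 and 6 are dropped because no unit from the other group falls in their stratum. Coarser cutpoints would keep more units at the cost of less similar matches, which is the trade-off the user fixes in advance. A full CEM analysis would also weight the matched strata, which this sketch omits.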

Still, the authors make several assumptions. People who visit a left-leaning website are treated as left-leaning, and vice versa. Can we naively assume that? I have visited Fox News a few times, and had I been in the study at the time, I would have been classified as a conservative (which I am not). Second, the 50,000 panelists knew that they were being studied and that their browsing history was recorded. This may have introduced bias, because people who know their activity is monitored may not browse as they normally do. Another interesting detail is that only 3% of Democrats say that they regularly encounter liberal media, whereas 8% of Republicans say the same. For Republicans, the share is more than double. What could be the reason? The difference between the groups is significant even on dial-up; does that mean that Republicans are more likely to polarize? The difference narrows for broadband users (19% and 20%).

Another interesting analysis concerns how internet availability varies with terrain. Although the authors do not combine them into a single claim, they make three separate statements:

- There are fewer broadband providers where the terrain is steeper

- More broadband providers increase the use of broadband internet

- People with broadband access consume far more partisan media, especially from sources congenial to their partisanship

Taking these three claims together, one could infer that places with less steep terrain are more polarized. Can we claim that? San Francisco, Seattle, and Pittsburgh are considered among the hilliest cities in the US. Does that mean that people in New York are more polarized than people in these three cities? And do more people in New York City have broadband access than people in Seattle?

Also, people with more money will have access to better internet. Therefore, it could also be inferred that rich people are more prone to polarization than poor people, which is not generally true (I assume). Education is positively correlated with income in most cases, so more educated people will have access to better internet because they can afford it. By the same logic, I could infer that education leads to polarization simply because educated people can afford better internet. This claim seems absurd to me, and so I have doubts about the study's conclusions as well. I think the assumption that “anyone who visits the left-leaning websites is left-leaning” is not the right basis for this study.