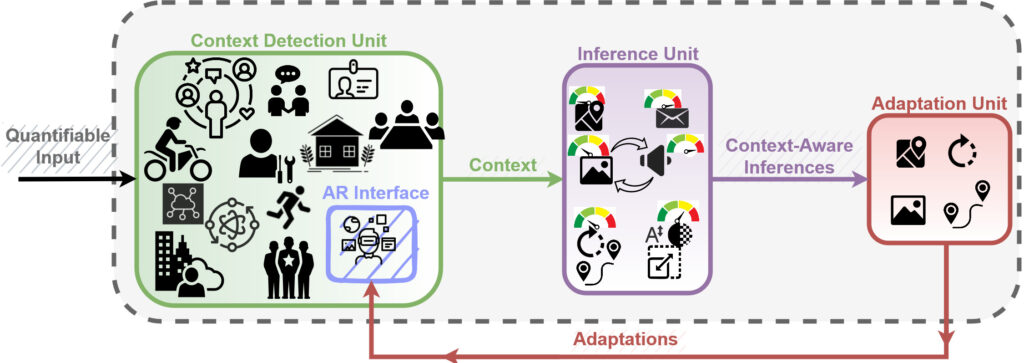

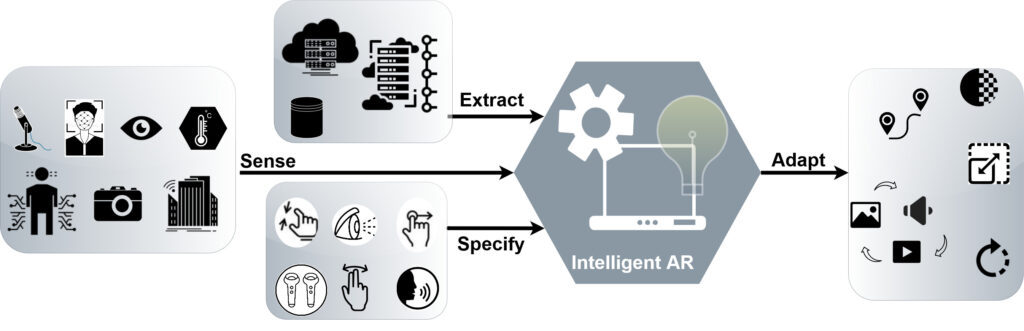

We have developed a holistic framework for the design of intelligent AR interfaces. Building on our proposed taxonomy of everyday AR contexts, the framework describes how an intelligent interface can infer users’ wants and needs and predict the desired adaptations to the AR interface design dimensions, virtual content, and interaction techniques. Depending on the context, the intelligent interface may adapt the system as a whole or individual apps.

This work is in preparation for publication.

[DC] Context-Aware Inference and Adaptation in Augmented Reality

2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), 2022.